Category: Government

The Trump administration says it can’t process tariff refunds because of computer problems

The US Customs and Border Protection says it currently can’t comply with an order to process billions of dollars in refunds stemming from tariffs imposed by President Donald Trump. In a filing on Friday, CBP executive director Brandon Lord says the agency’s digital import processing system is “not well suited to a task of this […]

New York City lawmakers push sweeping restrictions on private sector biometric surveillance

New York City lawmakers are weighing a sweeping new attempt to curb the spread of biometric surveillance in everyday life, advancing legislation that would sharply restrict the ability of businesses and landlords to collect facial scans, voiceprints, fingerprints, and other uniquely identifying data from the public.

The proposals were the subject of a lengthy hearing this week before the City Council’s Committee on Technology, where councilmembers, privacy advocates, and city officials debated whether biometric technologies have quietly expanded into retail stores and residential buildings with little oversight.

At issue were two bills that together would make New York City one of the most restrictive jurisdictions in the U.S. when it comes to private sector biometric surveillance.

The push reflects a growing concern among lawmakers that the technology has moved beyond narrowly defined security uses and is beginning to reshape the way businesses monitor customers and tenants.

Councilmember Shahana Hanif, the sponsor of one of the bills, argued that biometric identifiers present a fundamentally different privacy risk than ordinary data.

“You cannot cancel your face,” Hanif said during the hearing, emphasizing that biometric identifiers cannot be replaced once compromised.

The legislation responds in part to revelations that some retailers have begun experimenting with facial recognition systems designed to identify suspected shoplifters or repeat offenders.

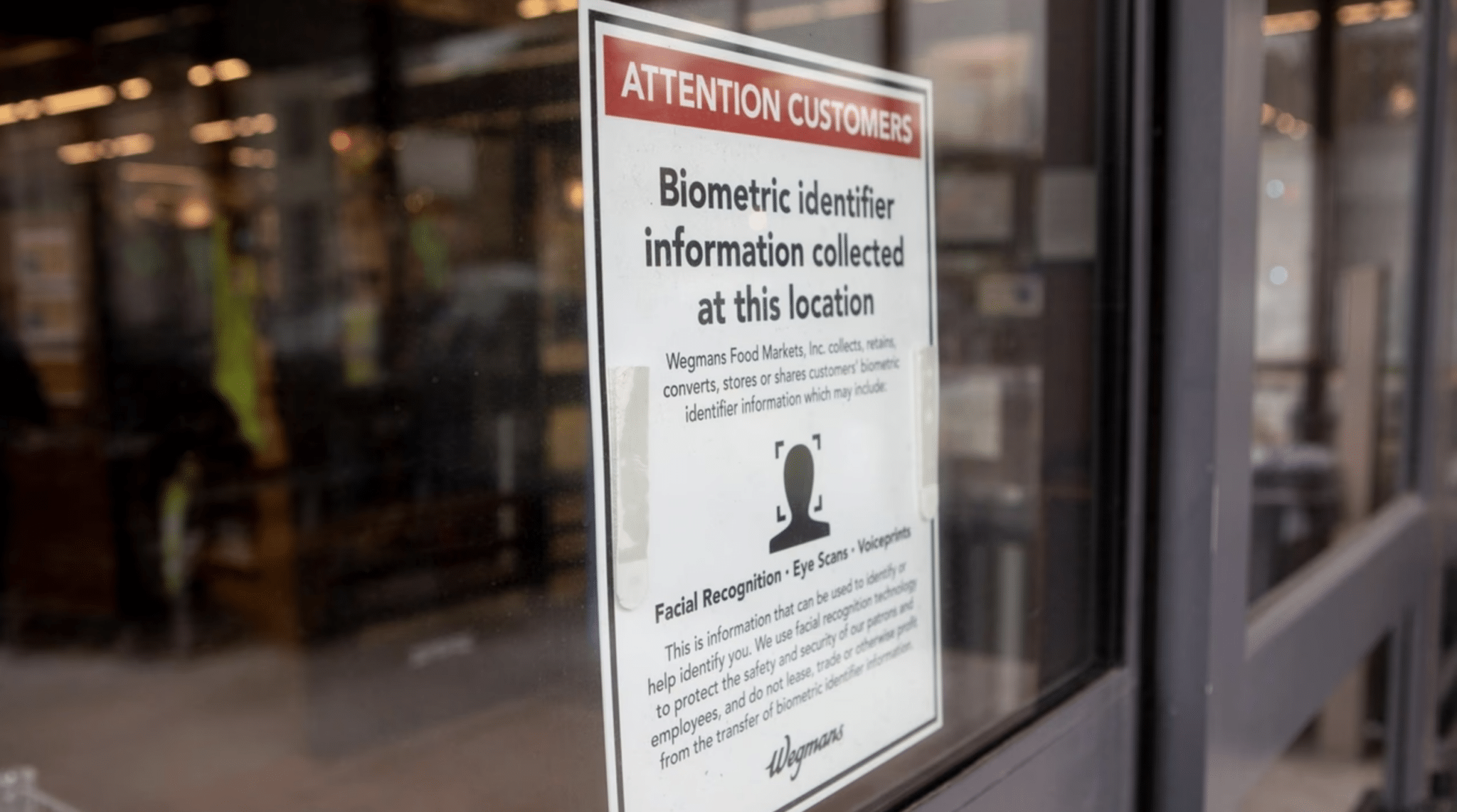

One example frequently cited by lawmakers involves grocery chain Wegmans, which has acknowledged using facial recognition technology in certain locations as part of its loss prevention strategy.

Under the first proposal, businesses that qualify as places of public accommodation would be barred from using biometric recognition technology to identify or verify customers.

The measure would go far beyond the city’s current rules, which mainly require businesses to post signs notifying customers if biometric information is being collected.

The bill would also expand how biometric identifiers are defined under city law. The definition would include not only fingerprints and iris scans but also voiceprints, facial geometry, and even movement patterns that could be used to identify an individual.

A second proposal introduced by Councilmember Pierina Ana Sanchez focuses on residential buildings. It would prohibit landlords from installing or using biometric recognition systems that identify tenants or their guests.

Lawmakers behind the measure say the growing use of facial recognition door entry systems in apartment buildings raises serious concerns about tenant privacy and potential surveillance inside private residences.

Together, the bills reflect a broader shift in the debate over biometric technology.

Earlier efforts in New York primarily focused on transparency, requiring businesses to disclose when they were collecting biometric data. The new proposals instead move toward outright prohibition.

Advocates for the legislation say that shift is necessary because disclosure alone has done little to slow adoption.

Civil liberties groups have warned for years that biometric surveillance systems can enable continuous tracking of people’s movements and associations.

Once deployed in retail stores or apartment buildings, they argue, such systems can quietly build databases of people who have done nothing wrong.

The debate gained additional momentum following several high-profile incidents in New York. In one widely publicized case, Madison Square Garden used facial recognition to identify and deny entry to lawyers affiliated with firms that were involved in litigation against the venue’s owner.

The incident highlighted how biometric tools could be used not just for security but also for private blacklisting.

At the same time, retailers have argued that biometric tools are becoming increasingly important for security as organized retail theft has grown more sophisticated.

Companies deploying the technology say facial recognition allows them to identify repeat offenders and prevent theft without requiring constant manual monitoring by security staff.

That argument did not persuade many councilmembers at the hearing, where lawmakers repeatedly pressed city officials and industry representatives about the risks of misidentification and data misuse.

The hearing also revealed gaps in the city’s own understanding of how biometric technologies are being used.

During testimony, representatives from the New York City Office of Technology and Innovation (OTI) acknowledged that the city does not maintain a comprehensive inventory of biometric data collection across agencies.

Alex Foard, OTI assistant commissioner of research and collaboration, explained that the office only tracks certain technologies reported under Local Law 35, a 2022 New York City regulation requiring city agencies to annually disclose their use of automated, AI, or algorithmic tools that impact the public.

That disclosure system, though, does not capture every instance in which biometric data may be collected or stored. Some uses may fall outside the reporting framework, meaning that even city officials cannot fully account for how the technology is deployed across government.

“I do want to indicate that agencies could be using biometric data in ways that aren’t involved in algorithmic decision making or AI or other uses, in which case we would not have visibility into that collection,” Foard said.

The lack of clarity troubled several councilmembers, who argued that if the city government itself cannot fully track biometric technologies, it becomes even harder to regulate their use in the private sector.

The Office of Technology and Innovation did not take a formal position on the proposed bans but acknowledged the complexity of regulating rapidly evolving surveillance tools.

In written testimony submitted to the committee, the agency said it supports efforts to strengthen privacy protections while ensuring that legitimate uses of technology can still be evaluated carefully.

The debate also reflects a broader national trend. Across the U.S., lawmakers are grappling with how to regulate biometric systems that can identify people automatically through cameras, microphones, or other sensors.

Many of the existing laws focus on notice and consent requirements, requiring companies to disclose when biometric data is collected. Illinois’ Biometric Information Privacy Act, for example, requires written consent before companies can gather biometric identifiers.

New York City already has a limited version of that approach. Current city law requires businesses that collect biometric information to notify customers through posted signs, but it does not prohibit the practice itself.

Supporters of the new legislation argue that those transparency requirements have proven insufficient. They point out that most consumers either do not notice the signs or do not understand the implications of biometric tracking systems that can log and analyze their movements across multiple visits.

Opponents, however, warn that an outright ban could create unintended consequences.

Retail industry groups say the technology can help prevent theft and improve security for both employees and customers. Landlords have also argued that biometric entry systems can be more secure than traditional key fobs or passcodes, which can easily be copied or shared.

Still, the political momentum in New York appears to be shifting toward stronger restrictions.

Privacy advocates argue that facial recognition and similar tools create the infrastructure for constant monitoring, allowing private actors to track people’s movements through stores, apartment buildings, and other everyday spaces.

For councilmembers pushing more restrictive legislation, the stakes are about more than just consumer privacy. They see biometric surveillance as a technology that could fundamentally alter the relationship between individuals and the spaces they inhabit, turning routine activities such as shopping or entering one’s apartment building into moments of automated identification.

DHS quietly built pathway to track Americans through advertising data economy

For years, the Department of Homeland Security (DHS) quietly experimented with turning the digital advertising ecosystem into a surveillance tool.

Internal privacy records show that Customs and Border Protection (CBP) tested whether smartphone advertising identifiers collected by mobile apps and sold through data brokers could be used to reconstruct the movements of individuals across the U.S.

At the same time, other DHS components were purchasing similar datasets for investigative use, often without completing required privacy reviews or establishing clear policies governing how the information could be accessed.

Now, those efforts are drawing renewed scrutiny on Capitol Hill.

A coalition of lawmakers led by Senator Ron Wyden is urging the DHS Inspector General (IG) to investigate whether Immigration and Customs Enforcement (ICE) has resumed buying Americans’ cellphone location data from commercial vendors, potentially reviving a controversial surveillance practice the inspector general previously concluded violated federal law.

The congressional inquiry arrives as ICE surveys the private sector for new tools capable of harvesting and analyzing location signals generated by the digital advertising marketplace, a data pipeline capable of mapping the movements of millions of devices in near real time.

Together, the records suggest that DHS’s interest in commercial location intelligence is not a new development. It is the continuation of a strategy that has been quietly developing inside the department for years.

Documents reviewed in connection with the inquiry illustrate how that strategy has evolved inside DHS. One record, a Privacy Threshold Analysis (PTA) obtained by 404 Media, describes an early pilot effort inside CBP that examined whether smartphone advertising identifiers, commonly known as AdIDs, could serve as a reliable investigative signal.

Another document reflects the mounting congressional concern that DHS components may still be purchasing commercial location data despite earlier oversight findings that identified legal and policy violations.

Taken together, the materials reveal a through-line connecting early experimentation with advertising identifier data, a major inspector general audit of DHS surveillance practices, and the current controversy surrounding ICE’s interest in new commercial location intelligence tools.

The threshold analysis was prepared for what CBP described as an AdID Efficacy Pilot. Privacy threshold analyses are preliminary reviews conducted within DHS to determine whether a proposed system or project requires a more detailed Privacy Impact Assessment (PIA) under federal law and departmental policy.

In this case, the document evaluated whether the proposed pilot involved privacy-sensitive information and whether additional safeguards would be required before operational use.

The project focused on advertising identifier data generated by smartphones and mobile applications. Advertising identifiers are unique device-level codes assigned by mobile operating systems and used by advertising networks to track user behavior across apps and websites.

Because the identifiers persist across sessions, they allow marketers to measure consumer activity over time. But the same characteristics that make AdIDs useful for targeted advertising also make them attractive for investigative analysis.

According to the privacy documentation, the pilot relied on commercially available datasets compiled from mobile applications that collect location signals through embedded software development kits.

These signals flow into advertising exchanges when mobile ads are served, where location data tied to a device identifier can be captured and aggregated by data brokers.

Commercial vendors then package that information into analytical platforms that allow users to query device movements over time.

The CBP pilot was designed to test whether those platforms could support border security investigations by revealing travel patterns associated with specific devices.

Analysts could examine location histories, identify devices that appeared together at particular locations, and reconstruct patterns of movement over extended periods.

Rather than functioning as a real-time tracking tool, the system was intended to support retrospective analysis. By querying historical datasets, investigators could attempt to identify devices that had previously crossed certain locations or traveled alongside other devices of interest.

The privacy analysis also indicates that the system could access historical location data extending back several years, and that analysts could query data in ninety-day increments.

While the document emphasizes that the data originated in the commercial advertising ecosystem rather than from telecommunications providers, it also acknowledges the privacy sensitivity of the information.

Movement patterns derived from location data can reveal highly personal details about individuals, including where they live, work, worship, or seek medical care.

“Location data is extremely sensitive … It is for that reason that ordinarily, the government must obtain a warrant from a judge in order to demand such data from phone or technology companies,” the lawmakers’ letter states.

For that reason, DHS privacy officials concluded that the system qualified as a privacy-sensitive program and would require a full PIA before broader operational deployment.

The document also noted that the pilot was connected to the DHS Intelligence Records System, a system of records that governs certain categories of intelligence information collected by CBP.

The internal pilot was not the only example of DHS components exploring commercial location intelligence.

In 2023, the DHS Inspector General performed a major audit examining how several DHS agencies had acquired and used what the report described as commercial telemetry data.

That category includes smartphone location histories derived from advertising identifiers and other commercial data sources.

The audit found that CBP, ICE, and the U.S. Secret Service had all obtained or used commercial location data without fully complying with federal privacy laws or departmental policy requirements.

Investigators determined that the agencies had procured or used the data without completing required PIAs in advance, a step mandated by the E-Government Act of 2002 when federal agencies deploy systems that collect or process personally identifiable information.

The review concluded that weak internal controls and insufficient oversight by the DHS Privacy Office allowed these acquisitions to proceed without the required safeguards.

The inspector general also found that DHS lacked a department-wide policy governing how commercial telemetry data could be purchased or used across components.

Without a consistent framework, different agencies were left to develop their own ad hoc rules for accessing and analyzing the data, the IG found.

The IG recommended that DHS develop a comprehensive department-wide policy governing commercial telemetry data and strengthen controls to ensure privacy assessments are completed before such technologies are deployed.

The audit also raised concerns about how the data was being accessed internally. Investigators found examples of employees sharing database login credentials and supervisors failing to review audit logs that could reveal potential misuse. In at least one case, an employee used the data to track coworkers.

Those findings now form the backdrop to the new congressional request for investigation.

In the letter Wyden and fellow lawmakers sent Tuesday to the inspector general, they said contracting records and public reporting indicate ICE may have resumed purchasing Americans’ location data from commercial vendors even though DHS has not fully implemented the oversight reforms recommended in the earlier audit.

The lawmakers also pointed to a 2025 procurement involving the investigative analytics firm PenLink. According to the letter, ICE issued a no-bid contract that included licenses for a location intelligence platform known as Webloc.

Webloc was developed by the data analytics company Cobwebs Technologies, which merged with Nebraska-based PenLink as part of a private equity acquisition valued at roughly $200 million.

An Israeli startup, Cobwebs had previously drawn controversy in the technology sector after Meta in December 2021 banned the company from its platforms during a crackdown on surveillance mercenary firms that were accused of targeting activists, journalists, and political figures.

The lawmakers’ letter states that ICE cancelled a scheduled February 10 congressional briefing about the contract at the last minute and has not provided further information about the purchase.

“ICE cancelled it with no explanation and without any offer to reschedule,” the letter states.

PenLink is not a new presence in federal law enforcement technology procurement. The company has spent decades supplying communications analysis platforms used by investigators to process call records, digital evidence and intercepted communications obtained through lawful investigative authorities.

Federal agencies including the Federal Bureau of Investigation, Drug Enforcement Administration, and numerous state and local law enforcement organizations have purchased PenLink software for digital investigative work.

Federal procurement records show that contracts for PenLink investigative platforms extend back more than a decade across multiple government agencies.

Many of the individual purchases appear relatively modest, often involving software licenses or maintenance agreements valued in the tens or hundreds of thousands of dollars.

However, larger enterprise deployments and multi-year agreements have pushed some contracts into the multi-million-dollar range.

When these enterprise contracts are combined with the dozens of smaller purchase orders issued across federal law enforcement agencies, the cumulative federal investment in PenLink and related investigative analytics platforms likely reaches well into the tens of millions of dollars.

The company’s merger with Cobwebs expanded that technology ecosystem into the commercial data analytics market, including platforms capable of processing open-source intelligence and location signals derived from commercial datasets.

That convergence between investigative analytics tools and commercial location intelligence platforms is precisely what has alarmed privacy advocates and members of Congress.

The DHS IG report made clear that the department has struggled to establish consistent rules governing how commercial location data can be used.

The IG determined that CBP, ICE, and the Secret Service “did not adhere to department privacy policies or develop sufficient policies before procuring and using commercial telemetry data.”

Even after the audit, the department still lacked a comprehensive DHS-wide policy governing the acquisition and use of commercial location intelligence.

The renewed congressional scrutiny suggests lawmakers believe those gaps remain unresolved.

If ICE ultimately moves forward with new contracts for advertising technology-based location intelligence, the capability first tested quietly in a CBP pilot program could become a routine investigative tool across DHS.

At that point, the central question for policymakers will not be whether the technology works. It will be whether the rules governing its use are strong enough to prevent the commercial data marketplace from becoming one of the most powerful surveillance infrastructures available to the federal government.

The Munich Security Conference Marks the End of the US-Led Order

Carol Schaeffer

US politicians flooded the summit—but Europe no longer sees the United States as a reliable partner.

The post The Munich Security Conference Marks the End of the US-Led Order appeared first on The Nation.

U.S. Customs and Border Protection Signs Clearview AI Deal for Tactical Targeting

U.S. Customs and Border Protection has signed an agreement with Clearview AI to use the company’s facial recognition platform for tactical targeting and strategic counter-network analysis workflows, according to contract […]

The post U.S. Customs and Border Protection Signs Clearview AI Deal for Tactical Targeting appeared first on ID Tech.

Remember the Epstein Binder Photo Op? MAGA Influencers Hope You Don’t.

Fifteen influencers waved “Epstein Files” binders at the White House. When scrutiny widened to Trump, the outrage narrowed just as quickly.

The post Remember the Epstein Binder Photo Op? MAGA Influencers Hope You Don’t. first appeared on Mediaite.