Eurail breach exposes passport data, fuels dark web identity trade

The fallout from a data breach at Eurail is raising fresh concerns about identity fraud, after stolen personal data from more than 300,000 customers surfaced for sale on the dark web.

The fear and anxiety caused by data breaches are playing out across Europe as reports show the reach of the dark web and its trade in stolen identities. The fallout from the Eurail breach continues to spread, with the Dutch seller of Interrail passes for train travel across Europe left picking up the pieces.

A vast number of travellers have been affected, and many are seeking to replace their passports at their own expense. Problems began with a cyberattack in December, when hackers accessed the personal details of more than 300,000 Eurail customers. The breach was severe because of the range of personal details the attackers copied.

The data accessed included passport numbers, names, phone numbers, email and home addresses, and dates of birth. But things took a darker turn last week when Eurail confirmed that the stolen data was being offered for sale on the dark web, with a sample dataset even posted on Telegram.

The revelation caused fear, anger and logistical headaches for many travellers. The Guardian reported that a UK traveller was told by the Passport Office to cancel her passport and now faces paying more than £100 (US$135.52) for a replacement.

The European Commission opened an investigation to establish the full scope of the Eurail incident and its potential impact. It did so because the breach involved participants in DiscoverEU, a youth scheme for funded travel across Europe that is financed under the Erasmus+ programme. In January, an update said the European Data Protection Supervisor had been notified about the personal data breach in accordance with regulations.

Gerard Tubb, a former journalist from Yorkshire, told The Guardian that the sheer volume of data stolen was enough for someone to convincingly impersonate him. Others have called for collective action to seek compensation under GDPR.

Eurail has urged customers to stay vigilant, update passwords and watch for suspicious messages, insisting it regrets the incident and is working to mitigate the impact. But for many, the apology is not sufficient. They argue that if their data had been properly protected, they wouldn’t now be facing the cost and stress of safeguarding their identities.

Eurail is still notifying affected customers but said that all those whose details appeared in the sample published on Telegram have been notified.

Dark web digital identity calculator puts focus on monetary worth

NordVPN has created a free calculator to determine how much your digital identity may be worth online. Users can input their country of residence, their personal documents and social media accounts, among other criteria. The VPN provider then calculates “your estimated identity value.”

According to NordVPN, dark web listings for identity documents such as passports and driver’s licenses are comparatively rare, with most IDs traded as digital scans. More sophisticated fraudsters may opt to purchase “fullz,” complete identity packages that include personal details like Social Security numbers. The majority of fullz come from the U.S., where years of data breaches have driven down prices.

Other analysis has found that widely accessible dark web markets and forums offer low-cost ways to assemble packages capable of defeating standard KYC checks. This booming trade in stolen and fabricated identities on the dark web is exposing weaknesses in biometric verification systems.

According to a sweep of more than 75,000 dark web market listings conducted by NordVPN and NordStellar, hacked social media accounts retail for around $40 on the dark web. The majority of these are Facebook accounts, which account for up to 40 percent of all stolen accounts sold online. These logins can also allow access to linked Instagram accounts, business pages or advertising tools.

In ecommerce, NordVPN found 125 Amazon accounts on sale at an average price of $77, making Amazon by far the most common ecommerce account type on the dark web. In second place were Walmart accounts, with an average price of $31.82.

The NordVPN research pointed to the emerging threat of identities taken from gaming platforms such as Steam, Roblox and the PlayStation Network (PSN), with the average selling price of a Steam account being $88.75.

“Steam has become something of a gateway for young threat actors,” the report says. “Many known criminals started out reselling accounts in gaming forums before transitioning to more serious cybercrime.”

Financial accounts, perhaps as expected, had high average selling prices. Chase and Bank of America accounts were the most and second-most commonly listed, with respective average prices of $619 and $417. Wise accounts had the highest average price, at $803.

“Every online account you own has a price tag on the dark web,” said Marijus Briedis, chief technology officer at NordVPN. “Your streaming subscriptions, your email, your bank login, your social media profiles.”

“Most people would be shocked at how little it costs a criminal to buy their entire digital identity.”
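To make the arithmetic behind these figures concrete, the following is a minimal sketch that adds up the average listing prices quoted above for a set of accounts. It is not NordVPN's calculator, whose inputs and weighting are not described here; the function name `estimate_identity_value`, the dictionary keys and the flat $40 figure for social media (reported only as "around $40") are assumptions made for illustration.

```python
# Average dark web listing prices in USD, taken from the figures quoted
# in the article. The keys are hypothetical labels chosen for this sketch.
AVERAGE_PRICE_USD = {
    "social_media": 40.00,      # reported as "around $40"
    "amazon": 77.00,
    "walmart": 31.82,
    "steam": 88.75,
    "chase": 619.00,
    "bank_of_america": 417.00,
    "wise": 803.00,
}


def estimate_identity_value(accounts):
    """Sum the quoted average prices for the given account types.

    This is a plain total, not a model of how any real calculator works.
    """
    unknown = [name for name in accounts if name not in AVERAGE_PRICE_USD]
    if unknown:
        raise KeyError(f"no quoted price for: {', '.join(unknown)}")
    return round(sum(AVERAGE_PRICE_USD[name] for name in accounts), 2)


if __name__ == "__main__":
    owned = ["social_media", "amazon", "steam", "chase"]
    print(f"Estimated total: ${estimate_identity_value(owned):.2f}")
```

On these averages, a person with one social media, one Amazon, one Steam and one Chase account would total $824.75, most of it from the bank login.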

AI regulation set to become US midterm battleground

The fight over AI regulation in Congress is becoming less a conventional technology policy debate than a struggle over who will control the legal architecture of a rapidly expanding surveillance and identity economy.

The broader political stakes are clear. AI regulation is becoming a proxy fight over democracy, federalism, religious nationalism, surveillance capitalism, and executive power.

The tech right’s effort to wrap AI acceleration in religious and civilizational language gives deregulatory politics an ideological force that ordinary industry lobbying lacks.

On one side are Republicans, allied with Silicon Valley right-leaning accelerationists, defense-tech investors, data brokers, and large platform companies who argue that the United States cannot afford a fragmented state-by-state regulatory regime while China races ahead.

On the other side are Democrats, privacy advocates, civil liberties groups, and state regulators who increasingly see AI not as a discrete technology sector, but as the connective tissue of biometric surveillance, immigration enforcement, criminal justice automation, algorithmic discrimination, data brokerage, and identity infrastructure.

Tech-right billionaires are courting the conservative Christian base that supports President Trump by arguing that AI is morally urgent, civilizational, and even divinely aligned. In that narrative, government regulation becomes not merely bad policy, but an obstacle to a providential technological mission.

This worldview can be seen in recent warnings by Palantir’s Peter Thiel, who has claimed that strict AI regulation will help usher in the Antichrist. The claim fuses apocalyptic religious imagery with deregulatory politics and turns technical governance into a culture war battleground.

That framing matters because it is emerging at a time when evangelical congressional Republicans and the White House are pressing for federal preemption of state AI laws.

The White House’s national legislative framework, backed by Executive Order 14365, Ensuring a National Policy Framework for Artificial Intelligence, issued on December 11, 2025, urges Congress to preempt state-level AI laws it describes as overly burdensome, inconsistent, and harmful to innovation.

Legal analysts have described preemption as one of the framework’s most consequential provisions because it would shift power away from states that have moved first on privacy, algorithmic accountability, children’s safety, and biometric restrictions.

This is the same pattern that has shaped the federal privacy debate for years. Congress has repeatedly failed to enact a comprehensive national privacy law, while states have moved ahead with their own statutes.

That state-driven model has frustrated business groups and large technology companies, which have long sought a federal law that would create uniform national rules while overriding stronger state protections.

The AI preemption debate now echoes that unresolved privacy fight, with Congress again confronting whether federal legislation should establish a floor that states can build on, or a ceiling that blocks them from going further.

Between now and the November midterm elections, the most likely congressional outcome is an intensified AI preemption campaign.

Republicans will continue to use hearings, committee markups, and draft legislation to argue that state AI laws threaten innovation, competitiveness, national security, and U.S. leadership. The core message will be that a patchwork of state rules will slow deployment, weaken American companies, and give China an advantage.

That argument will be especially powerful in committees dealing with commerce, energy, national security, homeland security, and financial services, where AI is already being described as a strategic infrastructure issue rather than merely a consumer protection issue.

But even if Republicans control the legislative calendar before November, passage of a broad AI preemption bill remains uncertain. Some Republicans are instinctively hostile to regulation, but others are also sensitive to state authority, children’s safety, censorship claims, deepfakes, fraud, and national security risks.

Democrats are unlikely to agree to sweeping state preemption unless it is paired with strong federal privacy, civil rights, biometric, and algorithmic accountability provisions. And that makes the most plausible near-term strategy an attempt to attach preemption language to larger must-pass bills, or to move narrower measures through committee that can serve as campaign messaging.

AI is not developing in a vacuum. It is being embedded into biometric identity systems, smart glasses, border and immigration enforcement tools, predictive policing, fraud detection, age verification, digital identity platforms, and data analytics systems used by agencies throughout the Department of Homeland Security (DHS) and state law enforcement.

The real governance question is not whether AI should exist, but whether systems built with facial recognition, iris scans, behavioral analytics, massive identity databases, and commercial data streams will be subjected to enforceable limits before they become routine infrastructure. That is where a Democratic-controlled Congress would likely change the trajectory.

If Democrats take the House, they will gain subpoena power, committee control, and the ability to set the oversight agenda. If they also take the Senate, they will have a stronger platform to press legislation, although Senate procedure, industry lobbying, and Trump’s veto power would still limit how far they could go.

The first and most immediate shift would likely be oversight. Committees would be expected to investigate federal AI procurement, DHS contracts, data broker relationships, biometric identification programs, algorithmic decision systems in benefits and immigration adjudication, and the role of companies and other vendors operating in the identity and surveillance ecosystem.

A Democratic Congress would also likely revisit whether states should be allowed to keep moving faster than Washington. Rather than endorsing broad preemption, Democrats would be more likely to push a federal privacy and AI accountability framework that preserves stronger state laws.

That approach would align with the growing view that states have become the primary enforcement laboratories because federal momentum remains stalled and state regulators are stepping into the gap.

In practice, Democrats may try to pass a federal baseline covering data minimization, algorithmic impact assessments, transparency, civil rights testing, biometric consent, data broker limits, and restrictions on high-risk government use, while resisting any Republican effort to wipe out state rules.

The hardest question is whether Democrats could move beyond oversight into durable legislation. A House majority alone would give them hearings, investigations, reports, and messaging bills, but not necessarily enacted law.

Control of both chambers would create more room for legislation, but even then, industry opposition and internal Democratic divisions could narrow the result.

Large technology companies may accept some federal rules if they receive uniformity and liability protection in return. Civil liberties groups will oppose any bill that preempts stronger state laws or fails to address biometric surveillance and government use.

Moderate Democrats may support innovation-friendly compromise language, while progressive Democrats will likely demand stronger enforcement and private rights of action.

The result after November would probably be a two-track process. The first track would be aggressive oversight of AI deployment in government, particularly in homeland security, immigration, law enforcement, defense-adjacent technologies, and benefits administration.

The second would be an effort to build a federal privacy and AI accountability bill that does not simply codify industry preferences. That bill would likely include provisions on children, deepfakes, automated decision making, biometric data, data brokers, and high-risk AI systems.

Whether it becomes law would depend on the size of Democratic majorities, the Senate filibuster, presidential positioning, and whether public concern over AI-enabled surveillance, fraud, and political manipulation becomes intense enough to overcome industry pressure.

The expansion of AI into biometric and identity infrastructure gives Democrats a concrete oversight target. If Democrats take control, the center of gravity will shift toward investigations, state authority, civil rights, privacy, and limits on government and corporate surveillance.

If Republicans keep control, Congress is likely to keep pushing uniform national rules designed to protect rapid deployment and curb state intervention.

In other words, the next phase of the congressional AI fight will not be about whether AI should be regulated. It will be about whether regulations are written to discipline AI power or to protect it.

Armenia approves legal framework for biometric passport and ID rollout

The Armenian government has approved amendments to a package of laws related to identity documents, creating a unified legislative framework for implementing a biometric passport and ID card system.

The amendments to the law On Identity Documents were approved by the Cabinet of Ministers on Thursday, paving the way for consolidating different regulations on IDs into a single law, according to Minister of Internal Affairs Arpine Sargsyan.

“There are also plans to legislatively regulate the relationship between the state and the private partner as part of the implementation of the biometric system,” Sargsyan adds.

Armenia signed a public-private partnership (PPP) agreement with Haypass in April last year to implement the ID document system. Haypass is a consortium established in 2024 between Idemia Identity Security France and ACI Technology S.à.r.l. to develop the biometric ID infrastructure. The planned system includes biometric ID cards designed by IN Groupe for foreigners, stateless individuals and permanent residents. IN Groupe acquired the Idemia Smart Identity division last year.

Issuance is scheduled to begin in the second half of 2026.

The newly adopted amendments also bring other changes, including making ID cards mandatory for Armenian citizens aged 16 or older. All documents for foreigners, refugees and stateless persons will also become biometric.

An ID card will also be required to obtain a biometric passport, according to Sargsyan. The country plans to bring all travel documents into compliance with ICAO Doc 9303, she adds.

The country is implementing new biometric documents as part of the Visa Liberalization Action Plan with the EU, which requires reforms in document security, migration management, and other areas to secure visa-free travel to the Schengen area.

The new documents will also enable greater digitalization and encourage the use of digital services, according to the Armenian government.

Japan moves toward age verification for social media filters and risk labels

Japan’s policymakers are considering their own version of age assurance for social media with content filtering taking the limelight.

Nikkei Asia reports that Japan is considering age-based content filtering by default for social media companies to tackle addiction among minors. Japan is also thinking of creating a system to measure the risks of each platform.

While most companies have filtering turned off by default, the Ministry of Internal Affairs and Communications wants social media providers to turn age-based filtering on from the start. The age ranges have yet to be established.

The ministry is considering age verification systems developed jointly with mobile carriers along with operating system providers. Mobile carriers in Japan already confirm customer identities when devices are purchased.

Under current Japanese law, social media companies are only required to make an effort to promote appropriate use by minors, and the measures they adopt vary widely. Parents and guardians are also free to turn off filtering tools, prompting doubts about the effectiveness of the existing framework.

In addition, the government is preparing a new evaluation system that would rate social media platforms based on risks such as excessive use or exposure to harmful content. The ratings would highlight features like content filters, ad‑display restrictions and time‑limit settings. This would enable users to quickly understand the risk profile of each service.

These proposals were presented today at a meeting of an expert panel chosen by the communications ministry, with a final report expected as early as next month. Any resulting guidelines or legal revisions would then be developed by the relevant agencies, led by the Children and Families Agency.

Elsewhere in Asia, Indonesia banned social media for under 16s, with Communication and Digital Affairs Minister Meutya Hafid saying the regulation applies to around 70 million minors and framing it as a way to “reclaim the sovereignty” of children’s future.

Malaysia is preparing its own “digital seatbelt” for regulating social media in 2026, which could involve identity verification technology and MyDigital ID integration. This combination could result in the most rigorous checks in the world on access to social media platforms for under 16s.

Age assurance for social media may advance through civil liability even if regulation stalls. Juries in U.S. state trials have awarded plaintiffs millions of dollars in damages in cases where the likes of Meta and YouTube were on trial over allegedly addictive features such as infinite scrolling and algorithmic amplification.

Panasonic adds QR-based biometric onboarding to streamline site access

Panasonic Connect has introduced a new QR‑based face registration feature for its KPAS Cloud access management service. The new feature is aimed at streamlining biometric onboarding at factories, construction sites and other high‑traffic facilities.

In 2025 the Japanese company launched the KPAS Cloud Site Management Service. It uses Panasonic’s facial recognition technology to control entry and exit for contractors, staff and visitors.

The company says the new QR code feature is designed to remove one of the biggest operational bottlenecks: administrators previously had to collect face images in advance or register users on site.

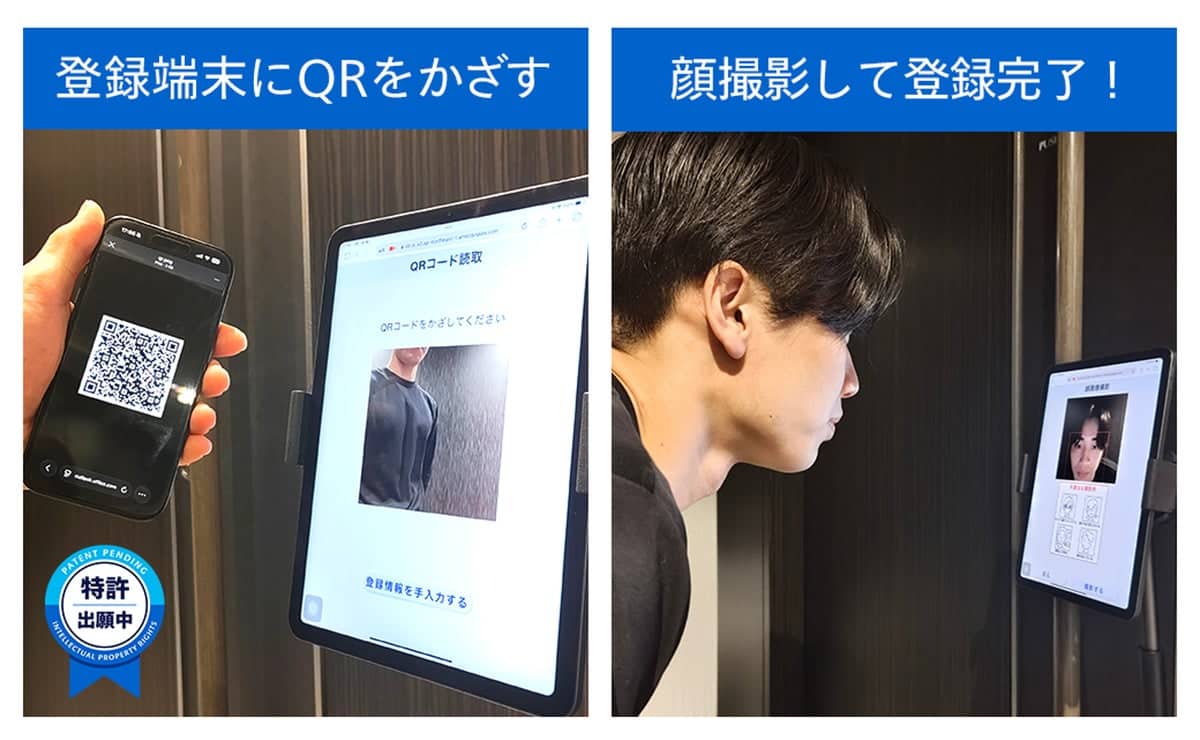

Under the updated workflow, administrators generate a QR code from the management portal and send it to the user by email. On arrival the user scans the QR code at a dedicated terminal and captures their facial image. They then complete registration and gain access to authorized areas.

Panasonic says the QR codes contain restricted identification data that can only be interpreted in authorized environments. This prevents third‑party access and the mechanism is now under patent application, the company said in a post on its website (in Japanese and machine translated into English).
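Panasonic has not published how its QR codes are protected, and the mechanism is patent pending. As an illustration of the general idea only, the sketch below uses a different and simpler technique: the QR payload is an opaque, single-use random token, and the identity it refers to is held server-side, so the code is meaningless to anyone outside the issuing system. The class `RegistrationTokenStore`, its methods and the 24-hour default lifetime are all hypothetical and do not describe KPAS Cloud.

```python
import secrets
import time


class RegistrationTokenStore:
    """Hypothetical server-side store for one-time QR registration tokens."""

    def __init__(self, ttl_seconds=86400):
        self._ttl = ttl_seconds
        self._pending = {}  # token -> (user_email, expiry timestamp)

    def issue(self, user_email, now=None):
        """Create the opaque string that would be encoded into the QR code."""
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)  # random, carries no personal data
        self._pending[token] = (user_email, now + self._ttl)
        return token

    def redeem(self, token, now=None):
        """Resolve a scanned token to a user once; return None if invalid."""
        now = time.time() if now is None else now
        record = self._pending.pop(token, None)  # pop makes it single-use
        if record is None:
            return None
        user_email, expires_at = record
        if now > expires_at:
            return None
        return user_email


if __name__ == "__main__":
    store = RegistrationTokenStore()
    qr_payload = store.issue("visitor@example.com")
    print("QR payload:", qr_payload)
    print("First scan resolves to:", store.redeem(qr_payload))
    print("Second scan resolves to:", store.redeem(qr_payload))
```

In this design the terminal would send the scanned string to the server and start face capture only if `redeem` returns a user. An intercepted email then exposes no identity data, although it could still be used to register unless the token is bound to further checks.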

The update is part of a broader plan to expand KPAS Cloud into a unified platform for on‑site operations, according to Panasonic. Future additions will include facial recognition‑based attendance management, door‑level access control and integration with security camera video feeds.

Panasonic says the goal is to consolidate functions that are currently handled by separate systems and provide a more comprehensive digital identity and access management for industrial and logistics environments.

From Pakistan to the U.S. and the Philippines, QR codes are linking up with identity verification. In the U.S., the Centers for Medicare & Medicaid Services (CMS) is aiming to modernize patient data access, with patients able to take a QR code to the doctor to transfer private and personal details. In the Philippines, a digital civil registration service with QR code verification and face biometrics matching is under way.